How To: Test Disk I/O with dd

The dd command, which is pretty much guaranteed to be pre-installed on your Linux or Unix server, can be used to quickly gauge the I/O capability of the available storage.

Although there are specialised file processing and I/O benchmarks, you may not always have the time or permission to install additional packages. That’s why using dd is one of the easiest ways to understand the storage you’re working with.

Test write speed using dd

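In this example, I’m creating a 1GB file (2048 blocks × 512KB) using a fairly large block size of 512KB. The oflag=direct option makes dd use direct I/O for the output file, bypassing the kernel’s page cache so that the result reflects the storage rather than RAM: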

greys@s5:~ $ dd if=/dev/zero of=./test bs=512k count=2048 oflag=direct

2048+0 records in

2048+0 records out

1073741824 bytes (1.1 GB) copied, 3.11501 s, 345 MB/s

greys@s5:~ $ dd if=/dev/zero of=./test bs=512k count=2048 oflag=direct

2048+0 records in

2048+0 records out

1073741824 bytes (1.1 GB) copied, 3.01872 s, 356 MB/s

That’s a pretty impressive throughput! If the filesystem we’re testing is hosted on a single disk, it’s almost certainly an SSD. Similar results can also be achieved with software RAID across several HDDs.
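The MB/s figure dd prints is simply the byte count divided by the elapsed time, in decimal megabytes (1 MB = 1,000,000 bytes). That is also why our 1GB file, 1073741824 bytes or exactly 1024³, shows up as "1.1 GB". Here is a minimal sketch for recomputing the figure, assuming awk is available; dd_rate is a hypothetical helper name, not part of dd or coreutils:

```shell
#!/bin/sh
# Hypothetical helper: recompute dd's throughput from its own output.
# $1 = bytes copied, $2 = elapsed seconds (both as printed by dd).
# dd reports decimal units, so divide by 1,000,000 to get MB/s.
dd_rate() {
    awk -v b="$1" -v s="$2" 'BEGIN { printf "%.1f\n", b / s / 1000000 }'
}

# Example, using the second write run above:
# dd_rate 1073741824 3.01872
```

For the second write run, dd_rate 1073741824 3.01872 prints 355.7, which dd rounds to the 356 MB/s shown above.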

Test read speed using dd

If you simply swap the if and of parameters from the previous example, you arrive at the following dd command for testing the speed of reading from the ./test file:

greys@s5:~ $ dd if=./test of=/dev/zero bs=512k count=2048 oflag=direct

If you try running it, though, you’ll run into two problems.

Problem 1: you get an error if you attempt direct I/O (oflag=direct) with a virtual device like /dev/zero:

greys@s5:~ $ dd if=./test of=/dev/zero bs=512k count=2048 oflag=direct

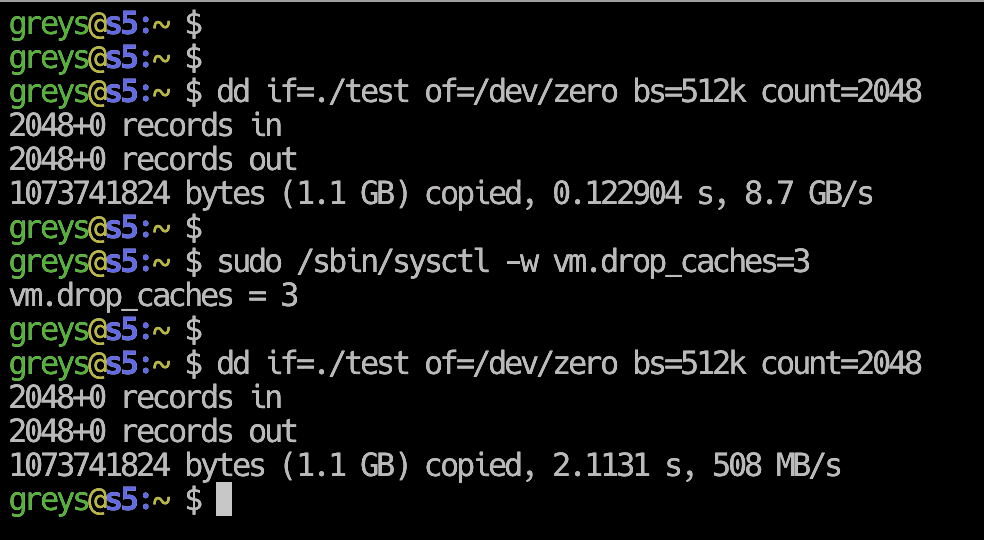

dd: failed to open '/dev/zero': Invalid argument

Problem 2: even if we remove oflag=direct, the results seem too good to be true:

greys@s5:~ $ dd if=./test of=/dev/zero bs=512k count=2048

2048+0 records in

2048+0 records out

1073741824 bytes (1.1 GB) copied, 0.159449 s, 6.7 GB/s

greys@s5:~ $ dd if=./test of=/dev/zero bs=512k count=2048

2048+0 records in

2048+0 records out

1073741824 bytes (1.1 GB) copied, 0.152424 s, 7.0 GB/s

Reads are often somewhat faster than writes, but an improvement this dramatic is usually not real. As you may have guessed, we get such high numbers because of the I/O caching that the OS cleverly applies when working with files: the data is being served from RAM rather than read from the disk.

The kernel caches file I/O in memory for as long as that memory is otherwise unused. As soon as some process needs memory, though, the kernel releases it by dropping some of the clean (already written to disk) cached pages.
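You can watch this caching happen. The sketch below assumes a Linux system: it reads the Cached: line of /proc/meminfo (reported in kB) and prints it in MB. cached_mb is a hypothetical helper name. Running it before and after a buffered read of a large file will typically show the cached figure grow by up to the file’s size, unless the kernel had to reclaim memory in the meantime.

```shell
#!/bin/sh
# Hypothetical helper (Linux only): current size of the page cache in MB.
# /proc/meminfo reports "Cached:" in kB; divide by 1024 for MB.
cached_mb() {
    awk '/^Cached:/ { printf "%d\n", $2 / 1024 }' /proc/meminfo
}

# Usage sketch: compare the cache size around a buffered read.
# cached_mb
# dd if=./test of=/dev/zero bs=512k count=2048
# cached_mb
```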

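So for an accurate read speed test with dd, we need to clear the existing cache first (this drops cached data; it doesn’t disable caching). Running sync beforehand writes out any dirty pages so that they can be dropped as well. Then drop the caches with this command: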

greys@s5:~ $ sudo /sbin/sysctl -w vm.drop_caches=3

vm.drop_caches = 3

… and then re-run the same dd command:

greys@s5:~ $ dd if=./test of=/dev/zero bs=512k count=2048

2048+0 records in

2048+0 records out

1073741824 bytes (1.1 GB) copied, 2.10861 s, 509 MB/s

That’s better! A read throughput of 509MB/s is far more plausible alongside the 356MB/s write throughput.
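The sync, drop-caches and re-read steps can be combined into a single function. This is a minimal sketch assuming Linux, GNU dd and either root or sudo access; read_test is a hypothetical name. It runs sync first, because drop_caches only discards clean pages, and it reads into /dev/null, the conventional data sink (writing to /dev/zero, as above, also just discards the data on Linux). If the caches can’t be dropped, it prints a warning instead of aborting, since the result will then be inflated.

```shell
#!/bin/sh
# Hypothetical helper: cold-cache read test for an existing file.
# Assumes Linux, GNU dd, and root or sudo rights to drop caches.
read_test() {
    file=${1:-./test}

    # Write out dirty pages first: drop_caches only discards clean ones.
    sync

    # Drop page cache, dentries and inodes (same as vm.drop_caches=3).
    if [ "$(id -u)" -eq 0 ]; then
        { echo 3 > /proc/sys/vm/drop_caches; } 2>/dev/null
    else
        sudo /sbin/sysctl -w vm.drop_caches=3 > /dev/null
    fi || echo "warning: caches not dropped, result may be inflated" >&2

    # Read the file back and discard the data; dd prints its stats to stderr.
    dd if="$file" of=/dev/null bs=512k
}

# Usage: read_test ./test
```

On filesystems that support direct I/O, GNU dd’s iflag=direct (applied to the input file rather than the output) is another way to keep the page cache out of a read test without needing root.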